2. A large language model

I asked ChatGPT what the basketball play “horns” is. I now know what answer it gave me. That doesn’t necessarily mean I know what “horns” is. It means I know what ChatGPT thinks it might be. I have reason to believe that is not necessarily right.

But it's gotta be close enough, right? Like, I bet you could get a large language model to draw up the best play for you out of timeouts and everything. Maybe it'll have a little animation of a little X cutting and another little X throwing a little ball and the little ball goes in a little hoop. That would be so cute.

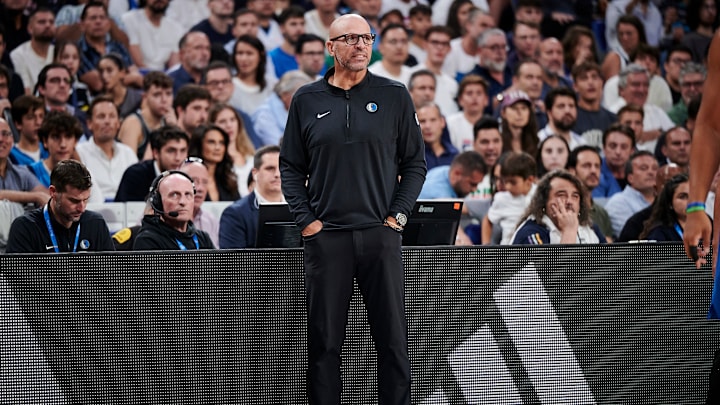

One thing a large language model will not do is make the same decisions that Jason Kidd would make every time. And by virtue of the large language model not being Jason Kidd, one could argue that automatically puts it at least a level above Jason Kidd. How far above is yet to be seen.

But large language models are built on the data they find. Assuming the model is continuously finding more data, perhaps that means people could influence the decision-making. If enough people mention “bring back Spencer Dinwiddie because it’d be funny” in blog posts or on Twitter, the large language model will be like “this is important” and start doing it. I think that’s reasonable to assume.

I’ll be honest, I don’t know exactly how they work. I just know they are really popular. Or at least they were about a month or two ago. Maybe they’re better now? Or maybe people have moved on? I don’t know. I stand by this recommendation though.